Sanjhee Gianchandani

Almost all assessments at all levels have multiple-choice questions or MCQs. Well-designed assessments have MCQs which are carefully constructed, enhance the test-taking experience, and polish the test-takers’ critical and analytical skills.

The structure of an MCQ

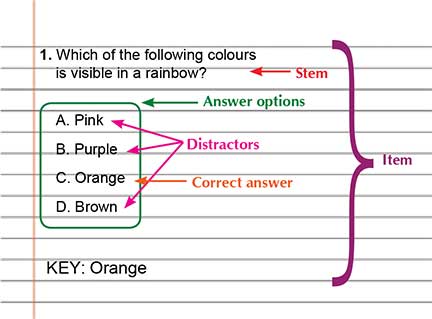

Let us dissect an MCQ and see what it looks like. Consider the following example.

The main question is called the ‘stem’. It may have two, three, or four ‘answer options’, of which only one is the ‘correct answer’; the rest are ‘distractors’. The answer to the MCQ is called its ‘key’. The stem, its answer options, and the key together make up an ‘item’.
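For readers who build question banks digitally, the anatomy described above can be sketched as a small data structure. This is only an illustrative sketch, not a standard format: the class name `Item`, its field names, and the sample question are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    """One MCQ item: the stem, its answer options, and the key (hypothetical model)."""

    stem: str
    options: tuple[str, ...]  # two, three, or four answer options
    key: int                  # position of the one correct answer in `options`

    def __post_init__(self) -> None:
        # Enforce the structure described in the text.
        if not 2 <= len(self.options) <= 4:
            raise ValueError("an item needs two, three, or four answer options")
        if not 0 <= self.key < len(self.options):
            raise ValueError("the key must point at one of the options")

    @property
    def correct_answer(self) -> str:
        return self.options[self.key]

    @property
    def distractors(self) -> tuple[str, ...]:
        # Every option other than the correct answer is a distractor.
        return tuple(o for i, o in enumerate(self.options) if i != self.key)


# A made-up sample item, used only to show the parts.
item = Item(
    stem="Which planet is closest to the Sun?",
    options=("Venus", "Mercury", "Earth", "Mars"),
    key=1,
)
print(item.correct_answer)  # Mercury
print(item.distractors)     # ('Venus', 'Earth', 'Mars')
```

Storing the key as a position rather than as text is one possible design choice; it keeps the correct answer and the distractors from drifting apart if an option is reworded.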

Why do we rely on MCQs?

Since multiple-choice questions are less susceptible to subjectivity, can be assessed easily, and make thinking visible, they are a reliable method of conducting assessments. They are short and sharp, which means that more of them can be posed in a test to examine more intensively how much the test-taker understands about a given subject. Marking MCQs is also less time-consuming and less dependent on manual labour. In the age of machine learning, wherein marking is computer-dependent, MCQs are perhaps the most digital-friendly form of assessment. Moreover, since a multiple-choice question takes less time to complete, more material can be covered in a single evaluation. This gives a broader overview of student understanding, thus increasing the validity of the test.

On the other hand, essay-type questions are easy to set but difficult to assess reliably. Owing to their subjectivity, they may also not work for a large number of test-takers. Most essay-type questions require human marking based on several different parameters. In such questions, the rubric defines the rigour, whereas in an MCQ, the options define the rigour.

In 1982, Christopher P Sole created the first multiple-choice examinations for computers. Since then, researchers and academicians have been debating whether MCQs are a better choice than long-drawn subjective questions. “MCQs open up a window for guesswork as the probability of getting a random answer correct is 25% (assuming four options). Subjective or descriptive questions, on the other hand, expect students to delineate the understanding clearly but, on the downside, reward those who have a better memory. For subjects or topics which are logical (left-brained) like maths or science, MCQs would fare better while for creative (right-brained) subjects and languages, it would be better to go for subjective questions,” says Vikas Kakwani, founder, AAS Vidyalaya, an online school that helps less privileged students continue their education.
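The 25% figure in the quote can be worked through with a short calculation. The sketch below assumes purely blind, independent guessing with equally likely options and no negative marking; the function names are made up for this illustration, and real test-takers who can rule out weak distractors will do better than these numbers suggest.

```python
from math import comb


def chance_of_guessing(n_options: int) -> float:
    """Probability that one blind guess lands on the key."""
    return 1 / n_options


def expected_guess_score(n_items: int, n_options: int) -> float:
    """Average number of items answered correctly by blind guessing alone."""
    return n_items * chance_of_guessing(n_options)


def chance_of_at_least(correct: int, n_items: int, n_options: int) -> float:
    """Probability of at least `correct` right answers out of `n_items`
    when every answer is a blind guess (binomial model)."""
    p = chance_of_guessing(n_options)
    return sum(
        comb(n_items, k) * p**k * (1 - p) ** (n_items - k)
        for k in range(correct, n_items + 1)
    )


print(chance_of_guessing(4))        # 0.25
print(expected_guess_score(40, 4))  # 10.0
# Chance of reaching half marks on a 40-item test by guessing alone:
print(f"{chance_of_at_least(20, 40, 4):.6f}")
```

Under these assumptions, a single guess succeeds one time in four, but the chance of guessing one's way to a high score falls quickly as the number of items grows, which is one reason longer MCQ tests are less vulnerable to guesswork.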

Creating effective MCQs

While it may look easy to create an MCQ, there is a lot of science behind it. Let us look at the features of a good MCQ and their relevance, and analyse some examples and non-examples.

The final word

Educationists say that there cannot be a single indicator of knowledge. It depends on the parameters that one wants to gauge through the assessment. “The ability to construct arguments and justify positions on a particular topic or concept can also be tested effectively. Some of these elements are missed out in an objective evaluation,” says Sai Sindhuja, principal, Narayana e-Techno School, Chennai, pointing out that MCQs are useful in evaluating a student’s understanding across multiple concepts quickly. Also, objective exams demonstrate a student’s ability to pick the right answer in the face of choices that look similar, she says. “Informed guesses are intuition at play. We sometimes bridge the gap in recall of knowledge with intuition and this is apparent in MCQ-type exams. In the real world where problems are abstract with no clear parameters, this is an important skill set,” explains Sindhuja, adding that both MCQs and subjective questions are useful tools to evaluate knowledge. A combination of the two along with other assessment formats is what works best.

References

• https://umanitoba.ca/centre-advancement-teaching-learning/support/multiple-choice-questions

• https://www.educationtimes.com/article/campus-beat-college-events/83467859/why-we-need-both-mcqs-and-subjective-questions

The author works as an English language curriculum designer and editor. She has a Master’s degree in English from Lady Shri Ram College for Women, University of Delhi, and a CELTA from the University of Cambridge. Her prior experience includes working as an English language assessment specialist, a Writing/Speaking examiner for various international examinations, an item writer, and a content developer for the K-8 segment. She has been empanelled as a consultant editor with various renowned publishing houses and has edited over 100 books ranging from academic materials to fiction, non-fiction, poetry, and children’s writing. Her articles on ELT pedagogy and learning strategies have been published in several educational magazines and blogs. She can be reached at sanjheegianchandani28@gmail.com.